The American Ivy League and other top-tier research institutions are no longer debating whether AI should be used in graduate research, but how. As of early 2026, Harvard’s Faculty of Arts and Sciences and Stanford’s Institute for Human-Centered AI (HAI) have moved beyond simple prohibitions, establishing “Structured Integration” frameworks that define the modern standard for academic integrity.

For the US graduate student, these standards represent a shift from “AI as a ghostwriter” to “AI as a research collaborator.” At Stanford, the “AI Golden Rule” now mandates that any output shared must be treated with the same responsibility as if the student authored it entirely from scratch. This level of accountability is raising the bar for what constitutes an “original” thesis in the digital age.

The 2026 Standard: Human-in-the-Loop Methodology

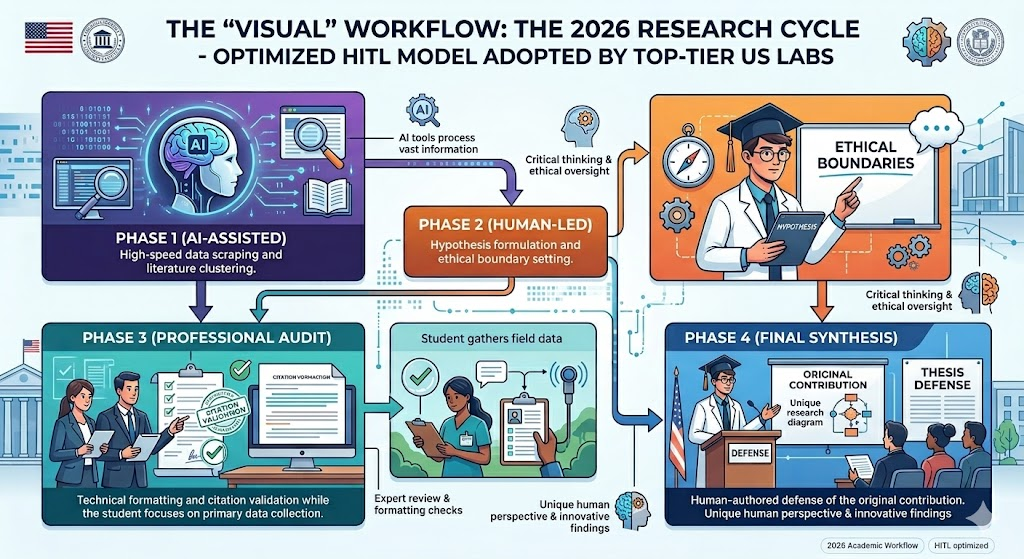

To maintain E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness), top labs now require a “Human-in-the-Loop” (HITL) approach. This ensures that while AI may process data, the critical synthesis—the “Expertise” component—remains uniquely human.

In practice, this means students are turning to specialized dissertation help services in the US to audit their AI-generated citations and to check that their logical frameworks do not fall victim to “algorithmic bias.” By seeking professional human oversight, scholars can bridge the gap between raw AI speed and the rigorous “Authoritativeness” required by a Harvard or Stanford defense committee.

Methodology: How We Gathered This Data

To provide this analysis, our content strategy team at MyAssignmentHelp conducted a 2026 audit of publicly available AI policy updates from 15 “R1” research universities in the US. Our data reflects the transition from “Detection-Based” policing to “Disclosure-Based” integrity.

During our research, we noted that many students seek homework help through structured academic support platforms so that their foundational coursework meets the same high-integrity standards required for their final thesis. We cross-referenced these findings with the latest “AI Literacy Frameworks” published by Stanford Teaching Commons and Harvard’s metaLAB to check their accuracy.

Is it ethical to use AI for my PhD?

Yes, but only with full disclosure and human-led synthesis. According to the 2026 consensus among US Graduate Schools, AI is an ethical tool for brainstorming, data cleaning, and literature mapping. However, submitting AI-generated prose as your own work remains a violation of academic integrity. The “ethics” lie in the transparency of the process; you must document which tools were used and how you verified the accuracy of the output.
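One way to keep that documentation consistent is to log each AI use as a small structured record and generate the disclosure text from the log. The sketch below is illustrative only: the `AIUseEntry` fields, the `render_disclosure` helper, the tool name "ExampleLLM", and the statement wording are all assumptions made for this example, not a format prescribed by Harvard, Stanford, or any other institution. Check your own graduate school's template before using anything like it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIUseEntry:
    """One documented use of an AI tool (all field names are hypothetical)."""
    tool: str          # name of the tool, as the student would report it
    purpose: str       # what the tool was used for
    verification: str  # how the student checked the output


def render_disclosure(entries: list[AIUseEntry]) -> str:
    """Render a plain-text disclosure statement from the logged entries."""
    if not entries:
        return "AI Use Disclosure Statement\nNo AI tools were used in this research."
    lines = ["AI Use Disclosure Statement"]
    for number, entry in enumerate(entries, start=1):
        lines.append(
            f"{number}. Tool: {entry.tool}. Purpose: {entry.purpose}. "
            f"Verification: {entry.verification}."
        )
    return "\n".join(lines)


if __name__ == "__main__":
    # "ExampleLLM" is a placeholder name, not a real product.
    log = [
        AIUseEntry("ExampleLLM", "literature mapping", "every cited source read in full"),
    ]
    print(render_disclosure(log))
```

Keeping the log as data rather than free text makes it harder to forget the verification step, since every entry has to say how the output was checked.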

Key Takeaways

- Structured Integration: Harvard and Stanford now favor “Structured Integration” over total AI prohibition.

- Mandatory Disclosure: 2026 guidelines require an “AI Use Disclosure Statement” in the preliminary pages of any dissertation.

- Human Accountability: You are personally responsible for any “hallucinations” or inaccuracies produced by an AI tool used in your research.

FAQ Section

Q: Do AI detectors actually work in 2026?

Most Tier-1 US universities have moved away from “sole-source” detection. Because AI detectors can be unreliable, institutions now use “Iterative Drafting” checks, where students must show the evolution of their work over time.
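To give a rough sense of what "showing the evolution of your work" could look like in practice, the sketch below compares consecutive saved drafts using Python's standard `difflib` module and flags any draft that shares little text with the one before it. This is a hypothetical illustration, not a description of any university's actual checking process: the function names and the 0.5 threshold are assumptions chosen for the example, and a low similarity score is not evidence of misconduct on its own.

```python
from difflib import SequenceMatcher


def revision_similarities(drafts: list[str]) -> list[float]:
    """Similarity (0.0 to 1.0) between each draft and the one before it."""
    return [
        SequenceMatcher(None, earlier, later).ratio()
        for earlier, later in zip(drafts, drafts[1:])
    ]


def abrupt_revisions(drafts: list[str], threshold: float = 0.5) -> list[int]:
    """Return indexes of drafts that share little text with their predecessor.

    The threshold is an arbitrary illustrative value, not a published standard.
    """
    similarities = revision_similarities(drafts)
    return [
        index + 1
        for index, score in enumerate(similarities)
        if score < threshold
    ]


if __name__ == "__main__":
    saved_drafts = [
        "AI policy is changing at research universities.",
        "AI policy is changing quickly at US research universities.",
        "Disclosure statements document tool use and verification.",
    ]
    print(revision_similarities(saved_drafts))
    print(abrupt_revisions(saved_drafts))
```

A student who keeps dated drafts (for example in version control or a document history) already has the raw material for this kind of record; the comparison only summarizes it.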

Q: Can I use AI to write my literature review?

You can use AI to organize the literature, but the narrative synthesis must be your own. Professional support is often used here to ensure that the human “voice” remains dominant.

Q: What is the penalty for undisclosed AI use?

Under 2026 standards, undisclosed AI use is treated as “Research Misconduct,” which can lead to expulsion or the revocation of a degree even after graduation.

Author Profile

Lachlan Miller specializes in the intersection of US EdTech and academic policy at MyAssignmentHelp. With a background in structural engineering and a Ph.D. in Education Strategy, he leads the team in developing “AI-Proof” research methodologies for graduate students. Lachlan is a recognized voice in the 2026 regulatory shifts, focusing on maintaining human ingenuity in an automated world.

References

- Stanford University (2026). “AI Guidelines for Marketing, Communications, and Research.”

- Harvard University Information Technology (2026). “Generative AI Guidelines for Academic Integrity.”

- FIU Graduate School (2026). “Guidance for the Effective and Responsible Use of AI in Dissertations.”

- OpenEduCat (2026). “AI and Academic Integrity: A Practical Guide for Universities.”